Thermal Design Power

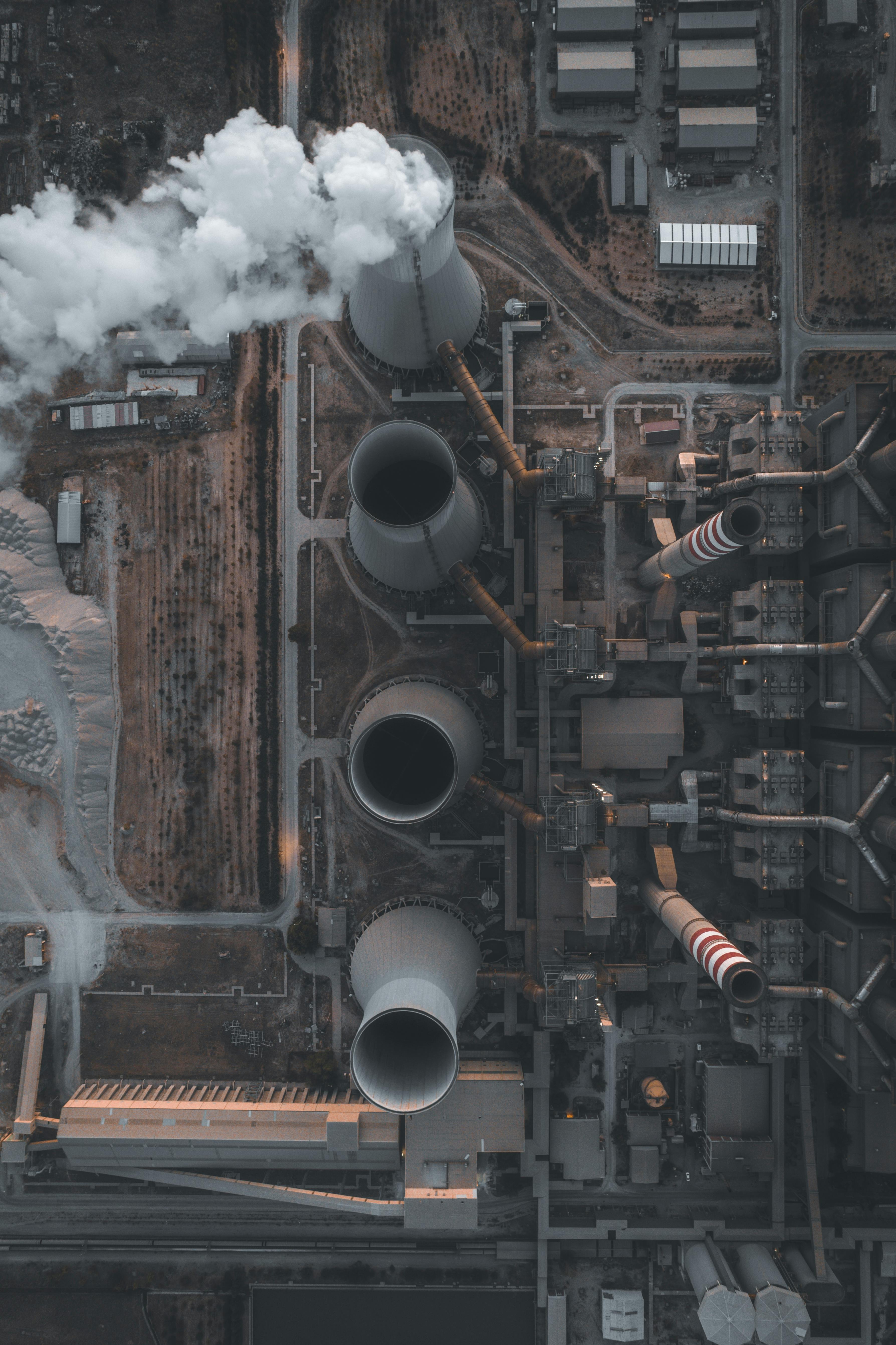

For decades, air cooling reigned as the undisputed king of data center thermal management. However, the explosive growth of Artificial Intelligence (AI) and High-Performance Computing (HPC) has fundamentally changed the thermal demands of the server room. As specialized accelerators such as NVIDIA’s Blackwell series push Thermal Design Power (TDP) beyond 1,000W per chip, traditional air-cooling methods are hitting a physical "thermal wall."

The resulting conflict is not just about temperature; it’s about density, efficiency, and the economic viability of next-generation AI infrastructure. The future is being decided in a direct battle between familiar air-based methods and advanced liquid solutions.

Air Cooling

The Incumbent’s "Thermal Cliff"

Air cooling relies on the movement of vast volumes of air to pull heat away from components. While reliable and simple to implement, its limitations at AI scales are no longer linear; they are catastrophic.

The Physics of Airflow

To cool a modern 100kW AI rack using air, a facility would need to move approximately 15,000 cubic feet of air per minute (CFM). For context, that is equivalent to hurricane-force winds moving through the narrow intakes of a server.
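The airflow figure above can be sanity-checked with the sensible-heat relation, airflow = heat load / (air density × specific heat × temperature rise). The sketch below is a rough estimate, not a design calculation: the air properties and the 11 K intake-to-exhaust temperature rise are assumptions chosen for illustration and do not come from the article.

```python
# Assumed air properties near sea level at about 20 °C (illustrative values).
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0
M3_PER_S_TO_CFM = 2118.88  # unit conversion: cubic metres per second to CFM


def required_airflow_cfm(heat_load_w: float, delta_t_k: float) -> float:
    """Airflow needed to remove heat_load_w with an air temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    flow_m3_s = heat_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)
    return flow_m3_s * M3_PER_S_TO_CFM


# 100 kW rack, assumed 11 K rise between intake and exhaust air.
rack_cfm = required_airflow_cfm(100_000, 11.0)
print(f"{rack_cfm:,.0f} CFM")
```

With these assumptions the result lands at roughly 16,000 CFM, in line with the approximate figure quoted above. A smaller allowed temperature rise pushes the required airflow up proportionally.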

- The Fan Power Law: This is the "hidden tax" of air cooling. Fan power consumption scales with the cube of the fan speed. To double the airflow to handle a denser GPU, you don't just double the power; you increase it eightfold.

- The Throttling Penalty: When air cooling fails to keep a GPU below its thermal limit (typically 80°C), the chip engages Dynamic Voltage and Frequency Scaling (DVFS). In plain terms, the chip slows itself down to avoid melting. Recent benchmarks show that air-cooled H100 systems can lose up to 17% of their computational throughput compared to liquid-cooled counterparts during sustained AI training runs.
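Both penalties reduce to simple arithmetic. The sketch below applies the cube relationship between airflow and fan power, then shows what a 17% throughput loss means for a cluster. The function names and the 1,000-GPU cluster size are hypothetical, and the cube law is an idealization that ignores real fan and duct behavior.

```python
def fan_power_ratio(airflow_ratio: float) -> float:
    """Idealized fan affinity law: power scales with the cube of airflow (fan speed)."""
    return airflow_ratio ** 3


def effective_gpus(gpu_count: int, throttle_loss: float) -> float:
    """GPU-equivalents of useful work left after a fractional throughput loss."""
    return gpu_count * (1.0 - throttle_loss)


# Doubling airflow costs eight times the fan power.
print(fan_power_ratio(2.0))

# Even a 50% airflow increase more than triples fan power.
print(fan_power_ratio(1.5))

# Hypothetical 1,000-GPU cluster at the article's worst-case 17% loss.
print(round(effective_gpus(1000, 0.17)))
```

Read this way, a 17% throttling loss on the hypothetical cluster is equivalent to leaving about 170 GPUs idle for the whole training run.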

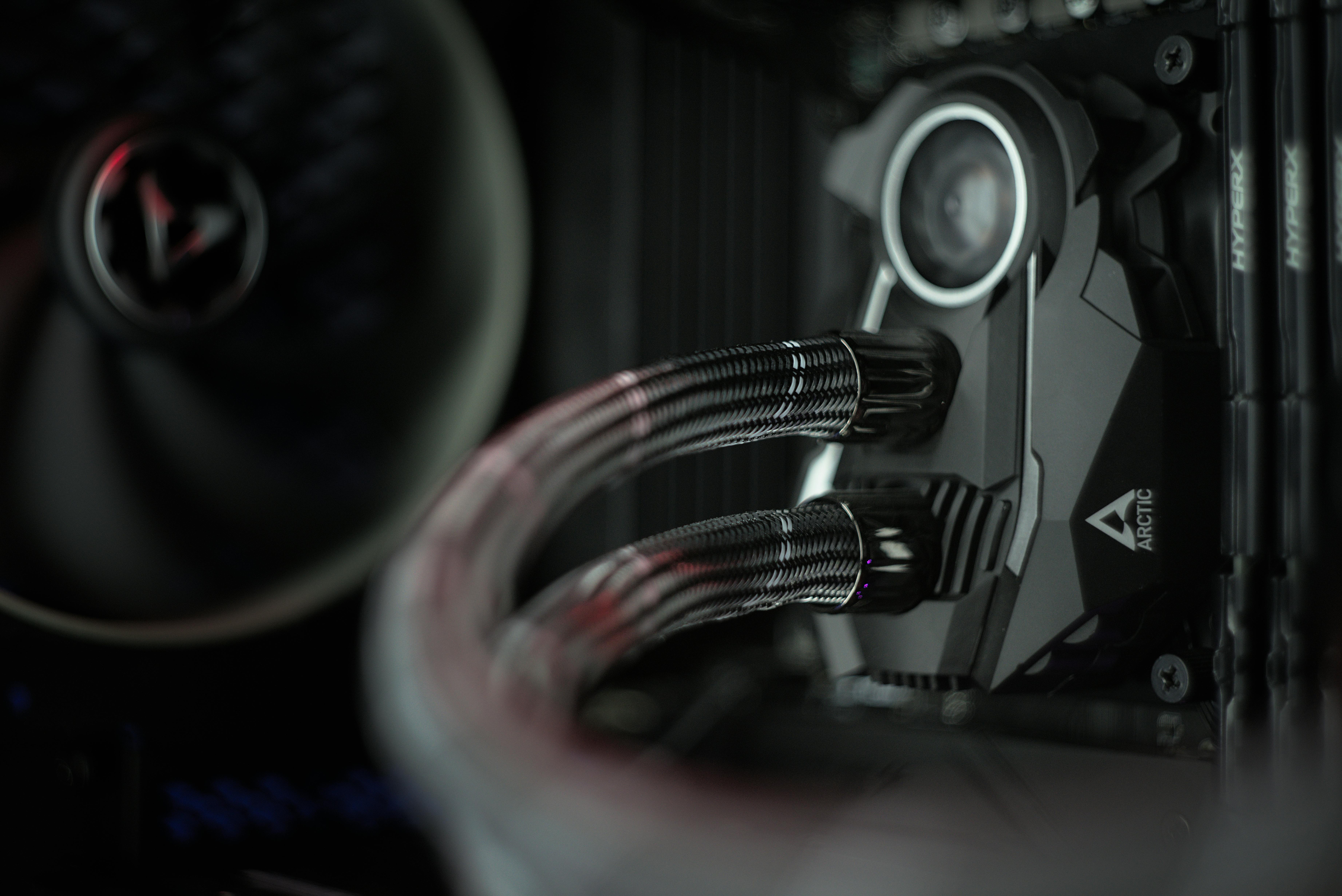

Liquid Cooling

The Density Disruptor

By volume, water can absorb over 3,000 times more heat than air for the same temperature rise. This fundamental physical advantage allows data centers to support the ultra-high-density needs of AI without massive, energy-hungry fan arrays.

Primary Liquid Solutions:

| Method | Mechanism | Capacity | Strategic Use Case |
| --- | --- | --- | --- |
| Direct-to-Chip (DTC) | Fluid runs through cold plates mounted directly on the GPU/CPU. | 50kW - 80kW | Retrofitting existing air-cooled facilities for AI. |
| Immersion Cooling | Entire servers are submerged in non-conductive dielectric fluid. | 100kW - 200kW+ | Greenfield AI factories requiring maximum density. |
| Rear Door Heat Exchangers | A liquid-filled radiator on the rack door captures heat before it leaves. | 20kW - 50kW | Transitioning legacy racks to higher densities. |

The Battle for Efficiency

Decoding PUE

In the era of power scarcity, Power Usage Effectiveness (PUE) is the metric that dictates profitability. Liquid cooling provides a massive advantage by shifting the cooling load from high-RPM server fans to centralized, efficient fluid pumps.

- Air Cooling (PUE 1.4 - 1.6): A significant portion of every watt entering the facility is wasted on moving air through chillers, CRAC units, and server fans.

- Liquid Cooling (PUE 1.02 - 1.1): By removing heat directly at the source, liquid systems can often utilize "warm water cooling." This means the facility can reject heat to the outside environment using simple dry coolers rather than energy-intensive mechanical chillers, even in warm climates.
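PUE is defined as total facility power divided by IT equipment power, so the ranges above translate directly into how much of a fixed power budget reaches the servers. The sketch below works that out for a hypothetical 10 MW facility. The budget and the two sample PUE values (1.5 and 1.1, picked from within the ranges above) are illustrative assumptions.

```python
def it_power_mw(facility_mw: float, pue: float) -> float:
    """IT power available from a fixed facility budget, since PUE = facility / IT."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return facility_mw / pue


def overhead_mw(facility_mw: float, pue: float) -> float:
    """Power spent on cooling and other non-IT overhead."""
    return facility_mw - it_power_mw(facility_mw, pue)


FACILITY_MW = 10.0  # hypothetical power budget

for label, pue in [("air, PUE 1.5", 1.5), ("liquid, PUE 1.1", 1.1)]:
    it = it_power_mw(FACILITY_MW, pue)
    waste = overhead_mw(FACILITY_MW, pue)
    print(f"{label}: {it:.2f} MW to IT, {waste:.2f} MW overhead")
```

Under these assumptions the same 10 MW feed delivers about 6.7 MW to IT equipment at PUE 1.5 and about 9.1 MW at PUE 1.1, which is why the metric matters most where grid power is the binding constraint.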

Operational Longevity and Reliability

Beyond raw performance, the transition to liquid cooling is a matter of asset protection.

- Extended Hardware Lifespan: Research indicates that every 10°C reduction in operating temperature can effectively double a semiconductor's lifespan. Liquid cooling typically keeps GPUs in the 45°C–55°C range, whereas air-cooled chips often hover near their 75°C–80°C thermal limit.

- Silence and Stability: Eliminating fans removes two major failure points: mechanical wear from vibration and dust accumulation. This leads to a more stable environment for high-value AI clusters, where a single node failure can halt a training job costing hundreds of thousands of dollars.
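The "doubles every 10°C" rule of thumb from the list above can be turned into a quick estimate. The sketch below is only that rule applied literally. Real failure rates depend on the device and its dominant failure mechanism, and the sample temperatures are simply picked from the ranges quoted above.

```python
def lifespan_multiplier(hot_temp_c: float, cool_temp_c: float) -> float:
    """Relative lifespan gain, assuming lifespan doubles per 10 °C of cooling."""
    return 2.0 ** ((hot_temp_c - cool_temp_c) / 10.0)


# Air-cooled chip near 80 °C versus a liquid-cooled chip at 50 °C.
print(lifespan_multiplier(80.0, 50.0))

# A more conservative pairing: 75 °C versus 55 °C.
print(lifespan_multiplier(75.0, 55.0))
```

Taken at face value, the rule implies a fourfold to eightfold lifespan difference between the two temperature ranges. That is best read as an order-of-magnitude indication, not a prediction.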

The Hybrid Reality

The transition to liquid will not happen overnight. Most "AI-Ready" data centers today are adopting a Hybrid Model. In this setup, liquid cooling handles the 70–80% of heat generated by the GPUs and CPUs, while a reduced air-cooling system manages the "ancillary" heat from memory modules, power supplies, and storage drives.

This allows operators to hedge their bets—gaining the density required for AI while maintaining the flexibility of air for general-purpose enterprise workloads.
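To see what the hybrid split leaves for the air side, the sketch below divides a rack's heat load between the liquid loop and the residual air system. The 100 kW rack size is a hypothetical example, and the liquid fractions are the 70% and 80% endpoints mentioned above.

```python
def split_rack_heat(rack_kw: float, liquid_fraction: float) -> tuple[float, float]:
    """Return (liquid_kw, air_kw) for a rack where liquid captures liquid_fraction of the heat."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_fraction
    return liquid_kw, rack_kw - liquid_kw


RACK_KW = 100.0  # hypothetical rack heat load

for fraction in (0.70, 0.80):
    liquid_kw, air_kw = split_rack_heat(RACK_KW, fraction)
    print(f"{fraction:.0%} liquid: {liquid_kw:.0f} kW to liquid, {air_kw:.0f} kW left for air")
```

For this hypothetical rack, the air system still has to remove 20 kW to 30 kW, so the "reduced" air-cooling side of a hybrid design remains a real engineering load.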

Conclusion: A New Standard for the AI Era

The battle is effectively over for high-density, AI-focused infrastructure. While air cooling remains suitable for standard web servers and low-density enterprise apps, it has reached its physical limit for the AI era.

Liquid cooling is no longer a luxury for overclockers or supercomputing labs; it is the mandatory foundation for the global AI factory. It offers the only sustainable path to manage the heat of tomorrow's processors, maximize the ROI of expensive GPU clusters, and achieve the radical energy efficiency required to power the digital future.
